With just a little bit of tweaking and improving your Postgres SQL queries, you can cut down on the amount of repetitive, error-prone application code required to interface with your database. More often that not, such a change also improves the performance of the application code too.

Here are a few tips and tricks that can help your application code outsource more work to PostgreSQL, and make your application slimmer and faster.

Upsert

Since Postgres v9.5, it is possible to specify what should happen when an insert fails because of a “conflict”. The conflict can either be a violation of a unique index (including a primary key) or any constraint (created earlier using CREATE CONSTRAINT).

This feature can be used for simplifying insert-or-update application logic into a single SQL statement. For example, given a table kv with key and value columns, the statement below will insert a new row (if the table does not have a row with key=’host’) or update the value (if the table has a row with key=’host’):

CREATE TABLE kv (key TEXT PRIMARY KEY, value TEXT);

INSERT INTO kv (key, value)

VALUES ('host', '10.0.10.1')

ON CONFLICT (key) DO UPDATE SET value=EXCLUDED.value;

Note that the column key is the single-column primary key of the table, and is specified as the conflict clause. If you have a primary key with multiple columns, specify the name of the primary key index here instead.

For advanced examples, including specifying partial indexes and constraints, see the Postgres docs.

Insert .. returning

The INSERT statement can also return one or more rows, like a SELECT statement. It can return values generated by functions, keywords like current_timestamp and serial/sequence/identity columns.

For example, here is a table with an autogenerated identity column and a column that holds the timestamp of creation of the row:

db=> CREATE TABLE t1 (id int GENERATED BY DEFAULT AS IDENTITY,

db(> at timestamptz DEFAULT CURRENT_TIMESTAMP,

db(> foo text);

We can use the INSERT .. RETURNING statement to specify only the value for the column foo, and let Postgres return the values it generated for the id and at columns:

db=> INSERT INTO t1 (foo) VALUES ('first'), ('second') RETURNING id, at, foo;

id | at | foo

----+----------------------------------+--------

1 | 2022-01-14 11:52:09.816787+01:00 | first

2 | 2022-01-14 11:52:09.816787+01:00 | second

(2 rows)

INSERT 0 2

From application code, use the same patterns/APIs you’d use to run SELECT statements and read in values (like executeQuery() in JDBC or db.Query() in Go).

Here is another example, this one has an auto-generated UUID:

CREATE TABLE t2 (id uuid PRIMARY KEY, foo text);

INSERT INTO t2 (id, foo) VALUES (gen_random_uuid(), ?) RETURNING id;

Similar to INSERT, the UPDATE and DELETE statements can also contain RETURNING clauses in Postgres. The RETURNING clause is a Postgres extension, and not part of the SQL standard.

Any in a set

From the application code, how would you create a WHERE clause that needs to match a column’s value against a set of acceptable values? When the number of values are known before hand, the SQL is static:

stmt = conn.prepareStatement("SELECT key, value FROM kv WHERE key IN (?, ?)");

stmt.setString(1, key[0]);

stmt.setString(2, key[1]);

But what if the number of keys are not 2 but can be any number? Would you construct the SQL statement dynamically? An easier option is to use Postgres arrays:

SELECT key, value FROM kv WHERE key = ANY(?)

The ANY operator above takes an array as argument. The clause key = ANY(?) selects all rows where the value of key is one of the elements of the supplied array. With this, the application code can be simplified to:

stmt = conn.prepareStatement("SELECT key, value FROM kv WHERE key = ANY(?)");

a = conn.createArrayOf("STRING", keys);

stmt.setArray(1, a);

This approach is feasible for a limited number of values, if you have a lot of values to match with, consider other options like joining with (temporary) tables or materialized views.

Moving rows between tables

Yes, you can delete rows from one table and insert them into another with a single SQL statement! A main INSERT statement can pull in the rows to insert using a CTE, which wraps a DELETE.

WITH items AS (

DELETE FROM todos_2021

WHERE NOT done

RETURNING *

)

INSERT INTO todos_2021 SELECT * FROM items;

Doing the equivalent in application code can be very verbose, involving storing the entire result of the delete in memory and using that to do multiple INSERTs. Granted, moving rows is maybe not a common use case, but if the business logic calls for it, the savings of application memory and database round trips presented by this approach make it the ideal solution.

The set of columns in the source and destination tables do not have to be identical, you can of course reorder, rearrange and use functions to manipulate the values in the select/returning lists.

Coalesce

Handing NULL values in application code usually takes extra steps. In Go, for example, you’d need to use types like sql.NullString; in Java/JDBC, functions like resultSet.wasNull(). These are cumbersome and error-prone.

If it is possible to handle, say NULLs as empty strings, or NULL integers as 0, in the context of a specific query, you can use the COALESCE function. The COALESCE function can turn NULL values into any specific value. For example consider this query:

SELECT invoice_num, COALESCE(shipping_address, '')

FROM invoices

WHERE EXTRACT(month FROM raised_on) = 1 AND

EXTRACT(year FROM raised_on) = 2022

which gets the invoice numbers and shipping addresses of invoices raised in Jan 2022. Presumably, shipping_address is NULL if goods do not have to be shipped physically. If the application code simply wants to display an empty string somewhere in such cases, say, it is simpler just to use COALESCE, and to remove NULL-handling code in the application.

You can also use other strings instead of an empty string:

SELECT invoice_num, COALESCE(shipping_address, '* NOT SPECIFIED *') ...

You can even get the first non-NULL value from a list, or use the specified string instead. For example to either use the billing address or the shipping address, you can use:

SELECT invoice_num, COALESCE(billing_address, shipping_address, '* NO ADDRESS GIVEN *') ...

Case

CASE is another helpful construct to deal with real-life, imperfect data. Let’s say rather than having NULLs in shipping_address for non-shippable items, our not-so-perfect invoice creation software has put in “NOT-SPECIFIED”. You’d like to map this to a NULL or an empty string when you read in the data. You can use CASE:

-- map NOT-SPECIFIED to an empty string

SELECT invoice_num,

CASE shipping_address

WHEN 'NOT-SPECIFIED' THEN ''

ELSE shipping_address

END

FROM invoices;

-- same result, different syntax

SELECT invoice_num,

CASE

WHEN shipping_address = 'NOT-SPECIFIED' THEN ''

ELSE shipping_address

END

FROM invoices;

CASE has an ungainly syntax, but is functionally similar to switch-case statements in C-like languages. Here is another example:

SELECT invoice_num,

CASE

WHEN shipping_address IS NULL THEN 'NOT SHIPPING'

WHEN billing_address = shipping_address THEN 'SHIPPING TO PAYER'

ELSE 'SHIPPING TO ' || shipping_address

END

FROM invoices;

Select .. union

Data from two (or more) separate SELECT statements can be combined using UNION. For example if you have two tables, one holding current users and one deleted, here’s how to query them both at the same time:

SELECT id, name, address, FALSE AS is_deleted

FROM users

WHERE email = ?

UNION

SELECT id, name, address, TRUE AS is_deleted

FROM deleted_users

WHERE email = ?

The two queries should have the same select list, that is, should return the same number and type of columns.

UNION also removes duplicates. Only unique rows are returned. If you’d rather have duplicate rows retained, use “UNION ALL” instead of UNION.

Complimenting UNION, there is also INTERSECT and EXCEPT, see the PostgreSQL docs for more info.

Select .. distinct on

Duplicate rows returned by a SELECT can be combined (that is, only unique rows are returned) by adding the DISTINCT keyword after SELECT. While this is standard SQL, Postgres provides an extension, the “DISTINCT ON”. It’s a little tricky to use, but in practice it’s often the most concise way to get the results you need.

Consider a customers table with a row per customer, and a purchases table with one row per purchases made by (some) customers. The query below returns all customers, along with each of their purchases:

SELECT C.id, P.at

FROM customers C LEFT OUTER JOIN purchases P ON P.customer_id = C.id

ORDER BY C.id ASC, P.at ASC;

Each customer row is repeated for each purchase they’ve made. What if we want to return only the first purchase of a customer? We basically want to sort the rows by customer, group the rows by customer, within each group sort the rows by purchase time, and finally return only the first row from each group. It’s actually shorter to write that in SQL with DISTINCT ON:

SELECT DISTINCT ON (C.id) C.id, P.at

FROM customers C LEFT OUTER JOIN purchases P ON P.customer_id = C.id

ORDER BY C.id ASC, P.at ASC;

The added “DISTINCT ON (C.id)” clause does just what was described above. That’s a lot of work with just a few additional letters!

Using numbers in order by clause

Consider fetching a list of customer names and the area code of their phone numbers from a table. We’ll assume that US phone numbers are stored formatted as (123) 456-7890. For other countries, we’ll just say “NON-US” as the area code.

SELECT last_name, first_name,

CASE country_code

WHEN 'US' THEN substr(phone, 2, 3)

ELSE 'NON-US'

END

FROM customers;

That’s all good, and we’ve the CASE construct too, but what if we need to sort it by the area code now?

This works:

SELECT last_name, first_name,

CASE country_code

WHEN 'US' THEN substr(phone, 2, 3)

ELSE 'NON-US'

END

FROM customers

ORDER BY

CASE country_code

WHEN 'US' THEN substr(phone, 2, 3)

ELSE 'NON-US'

END ASC;

But ugh! Repeating the case clause is ugly and error-prone. We could write a stored function that takes country code and phone and returns the area code, but there is actually a nicer option:

SELECT last_name, first_name,

CASE country_code

WHEN 'US' THEN substr(phone, 2, 3)

ELSE 'NON-US'

END

FROM customers

ORDER BY 3 ASC;

The “ORDER BY 3” says order by the 3rd field! You’ve to remember to update the number when you rearrange the select list, but it’s usually worth it.

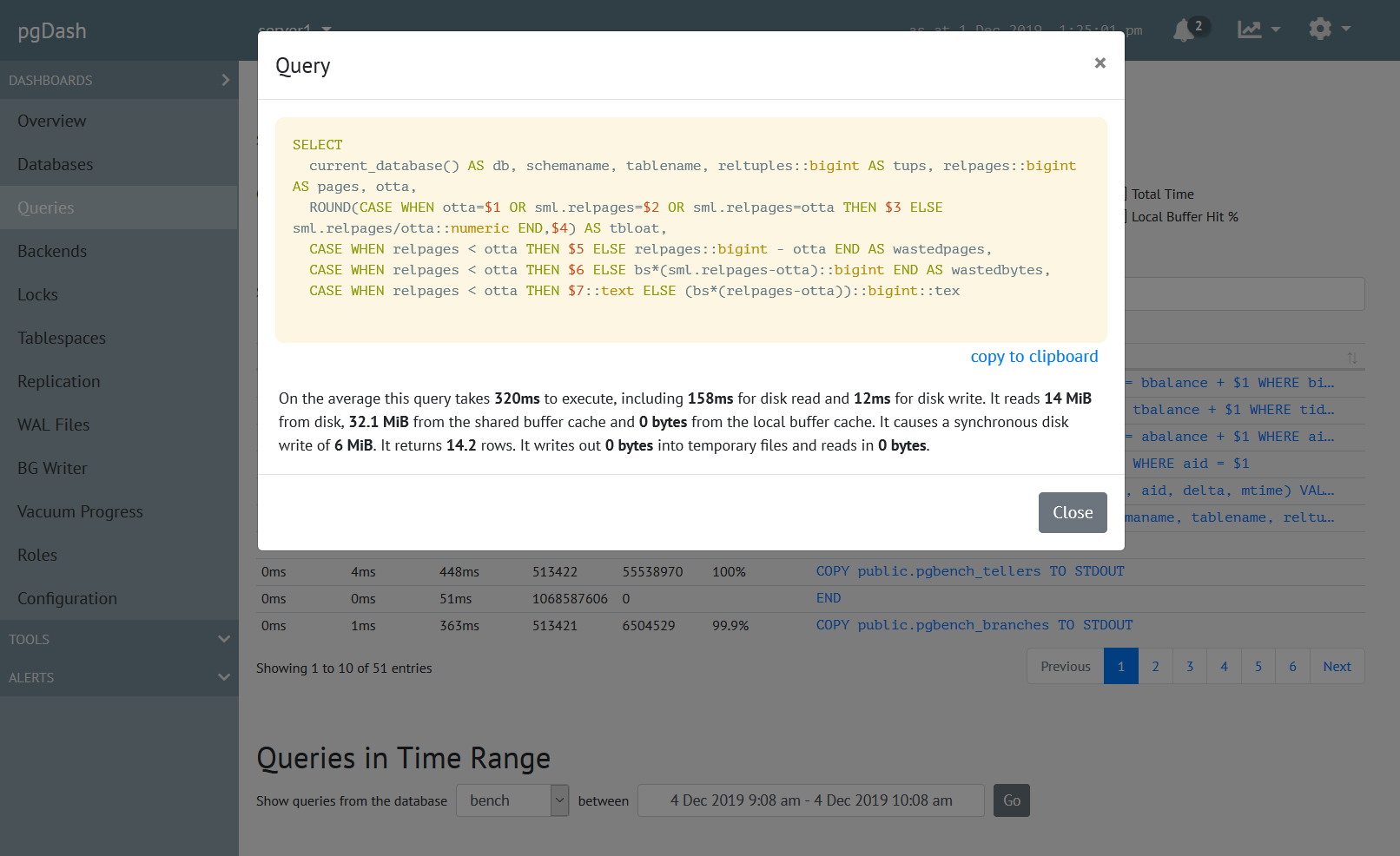

About pgDash

pgDash is a modern, in-depth monitoring solution designed specifically for PostgreSQL deployments. pgDash shows you information and metrics about every aspect of your PostgreSQL database server, collected using the open-source tool pgmetrics.

pgDash provides core reporting and visualization functionality, including collecting and displaying PostgreSQL information and providing time-series graphs, detailed reports, diagnostics, alerting, teams and more. Checkout the features here or signup today for a free trial.