Connection pooling is a simple but effective way to improve the performance of your apps and decrease the load on your PostgreSQL servers. Read on to learn more about using PgBouncer to pool PostgreSQL connections.

Why Connection Pooling?

PostgreSQL has a rather heavyweight connection handling architecture. For each incoming connection, the postmaster (the main Postgres daemon) forks out a new process (conventionally called a backend) to handle it. While this design provides better stability and isolation, it does not make it particularly efficient at handling short-lived connections. A new Postgres client connection involves TCP setup, process creation and backend initialization – all of which are costly in terms of time and system resources.

This of course is only a problem if connections are created too often and discarded without reuse. Unfortunately, it’s not uncommon to have a cluster of web nodes running applications written in PHP or other such languages that need to connect to the database once per page load. Batch jobs that rapidly make a bunch of connections in quick succession are also common. Employing connection pooling in such scenarios can drastically reduce the load on your PostgreSQL server and dramatically improve the query latencies.

With connection pooling, the clients connect to a proxy server which maintains a set of direct connections to the real PostgreSQL server. Typically, the clients do not (and should not) realize that they are connected to a proxy server rather than the actual server. The proxy may run on the same node as the client (example, on each web node), in which case the clients can connect to the proxy via Unix domain sockets which have very low connection overhead. Even if the proxy is on another node and the client needs a TCP connection to reach the proxy, the overhead of a new Postgres backend can be avoided.

What is PgBouncer?

PgBouncer is an open-source, lightweight, single-binary connection pooler for PostgreSQL. It can pool connections to one or more databases (on possibly different servers) and serve clients over TCP and Unix domain sockets.

PgBouncer maintains a pool of connections for each unique user, database pair. It’s typically configured to hand out one of these connections to a new incoming client connection, and return it back in to the pool when the client disconnects. You can configure PgBouncer to pool more aggressively, so that it can pick up and return the connection to the pool at transaction or statement boundaries rather than connection boundaries. There are some potentially undesirable consequences to those, however.

You should be able to install PgBouncer with your distro’s package manager:

# RedHat/CentOS/..

$ sudo yum install pgbouncer

# Debian/Ubuntu/..

$ sudo apt-get install pgbouncerIt is also available from the standard Postgres APT and YUM repos, which can be used if your distro’s packages are old or broken.

PgBouncer relies on a main configuration file, typically stored as

/etc/pgbouncer/pgbouncer.ini. You can invoke pgbouncer as a systemd service,

or simply run it even without superuser privileges with the path to this

configuration file.

To give it a spin, let’s create a database db1 and a user user1 on our server:

$ sudo -u postgres psql

psql (10.6 (Debian 10.6-1.pgdg90+1))

Type "help" for help.

postgres=# create user user1 password 'user1pass';

CREATE ROLE

postgres=# create database db1 owner user1;

CREATE DATABASE

postgres=#Clients will connect to the database db1 with the username user1 and

password user1pass. Our goal is to get the clients to connect to PgBouncer

which will proxy and pool the connections to the actual server.

Now let’s create a file (anywhere) with these contents:

[databases]

db1 = host=localhost dbname=db1

[pgbouncer]

listen_addr = 127.0.0.1

listen_port = 16432

auth_file = userlist.txtWe also need to create a “userlist.txt” file in the same directory, with the username and (hashed) passwords of users that PgBouncer will allow to connect. Create “userlist.txt” with the following contents:

"user1" "md5638b81c77071ea624d1ad4adb1433540"The second value is the MD5 of “user1passuser1”, prefixed with “md5”. This is the usual Postgres convention.

Now let’s start PgBouncer in the foreground:

$ /usr/sbin/pgbouncer pgbouncer.ini

2019-02-05 11:46:18.011 10033 LOG file descriptor limit: 1024 (H:1048576), max_client_conn: 100, max fds possible: 130

2019-02-05 11:46:18.012 10033 LOG listening on 127.0.0.1:16432

2019-02-05 11:46:18.013 10033 LOG listening on unix:/tmp/.s.PGSQL.16432

2019-02-05 11:46:18.014 10033 LOG process up: pgbouncer 1.9.0, libevent 2.0.21-stable (epoll), adns: c-ares 1.12.0, tls: OpenSSL 1.1.0j 20 Nov 2018We have now started a PgBouncer that is listening on 127.0.0.1 TCP port 16432,

as well as on the Unix domain socket /tmp/.s.PGSQL.16432. The only “database”

available on this proxy server is db1. The only user that can connect to this

server is user1. Let’s try connecting with psql:

$ psql -U user1 -p 16432 -h localhost db1

Password for user user1:

psql (10.6 (Debian 10.6-1.pgdg90+1))

Type "help" for help.

db1=> select inet_server_addr(), inet_server_port();

inet_server_addr | inet_server_port

------------------+------------------

127.0.0.1 | 5432

(1 row)

db1=>The client (psql) connects successfully to localhost:16432, but you can see that the connection is actually being proxied to localhost:5432.

You can try disconnect and connecting again a few times, then check how many connections are still around on the actual server:

postgres=# select count(*) from pg_stat_activity

postgres-# where datname='db1' and usename='user1';

count

-------

1

(1 row)PgBouncer will not disconnect the actual connection when the client disconnects. You can configure the minimum, maximum and reserved connections that PgBouncer will maintain for each pool in the config file.

Deploying PgBouncer

Where do you install and run PgBouncer? There are different answers, with different advantages:

- On the Postgres server node: You can install it alongside the PostgreSQL server itself, on the same node. The clients connect to the PgBouncer port rather than the Postgres port. This has the effect of an “enhanced” Postgres which does connection pooling internally. You also only have to maintain one copy of the configuration files for PgBouncer. On the other hand, this involves actually running something else also on the PostgreSQL server node, which may not be easy or permitted (firewalls, policies) or even possible (AWS RDS).

- On client nodes: You can install PgBouncer in each client node, for example each web node runs Apache and PHP, and the PHP scripts connect to the local PgBouncer. This has the advantage of not having to disturb the server setup, and the pool configuration can be used to keep the server load predictable. On the flip side, if the number of client nodes are huge, or can vary a lot depending on the load/traffic, the server can be overloaded quickly.

- As a standalone cluster: The third option to have a cluster of independent, stateless PgBouncer nodes, fronted by a TCP load balancer like HAProxy. This setup, while being more complicated than the other two options, provides maximum control and configurability.

Administration

PgBouncer allows users marked as admins to connect to a virtual database called “pgbouncer” and issue commands to control the server and see statistics. To try this, let’s first mark “user1” as an admin by modifying the pgbouncer.ini file:

[databases]

db1 = host=localhost dbname=db1

[pgbouncer]

listen_addr = 127.0.0.1

listen_port = 16432

auth_file = userlist.txt

admin_users = user1Now user1 can connect to the database named “pgbouncer”:

$ psql -U user1 -p 16432 -h localhost pgbouncer

Password for user user1:

psql (10.6 (Debian 10.6-1.pgdg90+1), server 1.9.0/bouncer)

Type "help" for help.

pgbouncer=#From here, you can do various things like enabling or disabling a particular database, inspecting and reloading the configuration, and more:

pgbouncer=# RELOAD;

RELOAD

pgbouncer=# DISABLE db1;

DISABLE

pgbouncer=# ENABLE db1;

ENABLE

pgbouncer=# SHOW FDS;

fd | task | user | database | addr | port | cancel | link | client_encoding | std_strings | datestyle | timezone | pa

----+--------+-------+----------+-----------+-------+----------------+------+-----------------+-------------+-----------+-----------+---

6 | pooler | | | 127.0.0.1 | 16432 | 0 | 0 | | | | |

7 | pooler | | | unix | 16432 | 0 | 0 | | | | |

9 | server | user1 | db1 | 127.0.0.1 | 5432 | 45404395804679 | 0 | UTF8 | on | ISO, MDY | localtime |

(3 rows)Monitoring

There are also commands to show various stats about the PgBouncer, including:

- Per-database stats about query duration, client wait time, network usage, transaction counts

- Per-pool stats about number of active and waiting clients, idle and used server connections

Statistics are retrieved with “SHOW xyz” style commands, like this one to fetch pool-related statistics:

pgbouncer=# SHOW POOLS;

-[ RECORD 1 ]---------

database | db1

user | user1

cl_active | 0

cl_waiting | 0

sv_active | 0

sv_idle | 0

sv_used | 1

sv_tested | 0

sv_login | 0

maxwait | 0

maxwait_us | 0

pool_mode | session

-[ RECORD 2 ]---------

database | pgbouncer

user | pgbouncer

cl_active | 1

cl_waiting | 0

sv_active | 0

sv_idle | 0

sv_used | 0

sv_tested | 0

sv_login | 0

maxwait | 0

maxwait_us | 0

pool_mode | statementFurther Reading

The PgBouncer Home Page has more details about all the various features and configuration options of PgBouncer.

- PgBouncer Home Page

- PgBouncer GitHub Repository

- Postgres Wiki has information about connection pooling

- Pgpool is another option for connection pooling

About pgDash

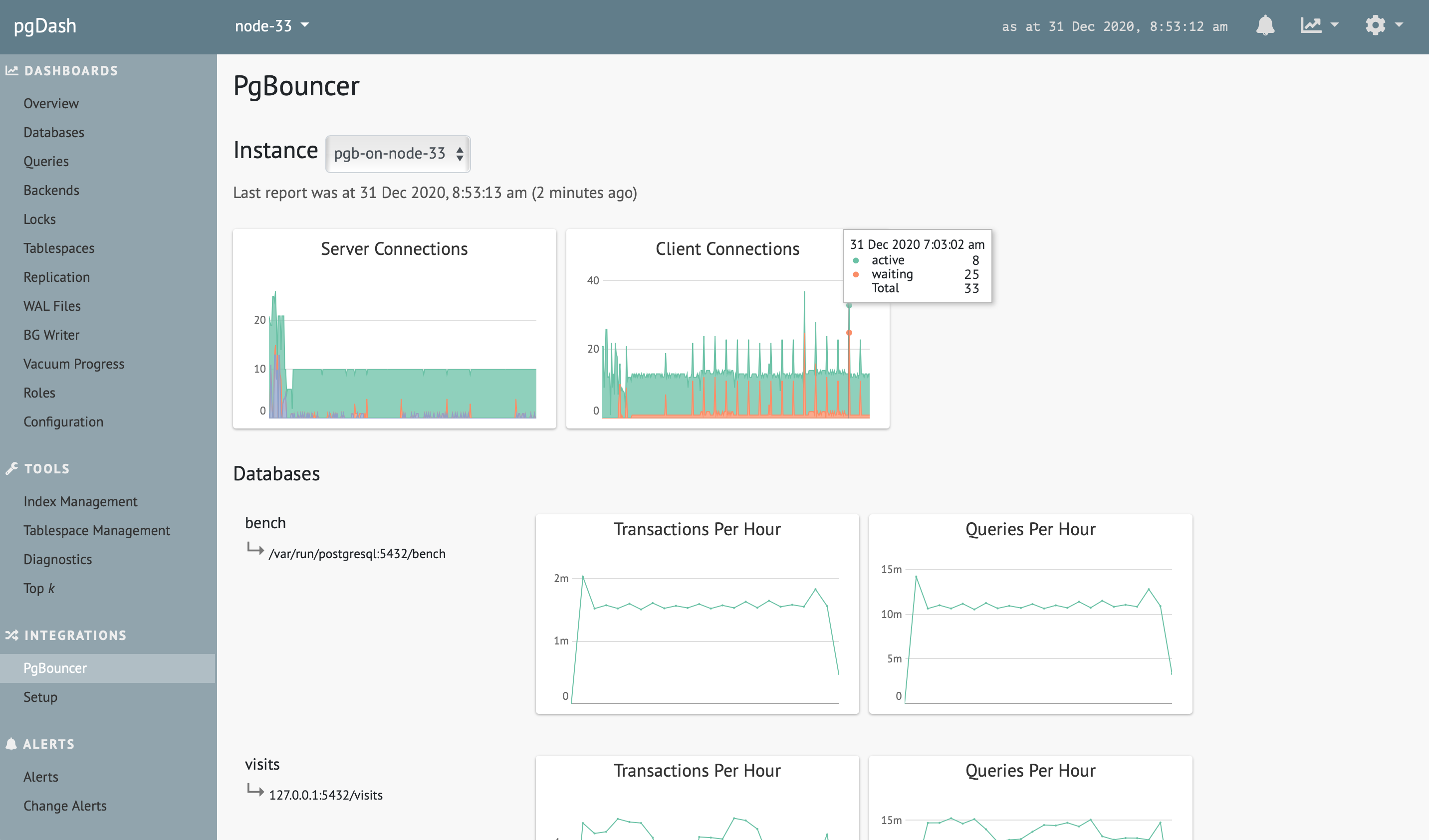

pgDash is a modern, in-depth monitoring solution designed specifically for PostgreSQL deployments. pgDash shows you information and metrics about every aspect of your PostgreSQL database server, collected using the open-source tool pgmetrics. pgDash provides core reporting and visualization functionality, including collecting and displaying PostgreSQL information and providing time-series graphs, diagnostics and query performance improvement suggestions. pgDash includes integrations with AWS Cloudwatch (RDS and Aurora) and pgBouncer, as well as alerting, team sharing and more.

pgDash can monitor your PgBouncer’s pooling efficiency, load and client wait time, and ensure that PgBouncer does not have a negative impact on your applications’ query latency. Learn more here or signup today for a free trial.