PostgreSQL comes with solid, time-tested features that lets you define exactly what should happen when multiple clients try to update the same data concurrently. One of them is the isolation level of transactions.

Read on to learn more about how transaction isolation works in PostgreSQL.

Transactions and Isolation Level

Transactions are the fundamental way to mutate data in an RDBMS. Modern RDBMS allow more than one transaction to run concurrently, and consequently come with a variety of tools – some standard, some RDBMS-specific – for application developers to specify how their transactions should or should not interact with other transactions.

Transaction isolation levels and pessimistic locks are two such tools. Although these are necessary for data integrity and performance, they are unfortunately not intutive to understand or use.

The isolation level of a transaction, in PostgreSQL, can be one of:

- Read Committed

- Repeatable Read

- Serializable

Every transaction has it’s isolation level set to one of these when it is created. The default level is “read committed”.

Note that the SQL standard also defines “read uncommitted”, which is not supported in Postgres. You have to use the nearest, higher level of “read committed”.

Let’s see what these levels mean.

Read Committed

What happens when one (unfinished) transaction inserts rows in a table and the other (also unfinished) transaction tries to read all rows in the table? If the second transaction is able to see the rows inserted by the first, then that read is called a dirty read – because the first transaction can rollback and the second transaction would have read “phantom” rows that never existed.

The read committed isolation level guarantees that dirty reads will never happen. Here is an example:

As you can see, the second transaction could not read the first transaction’s as-yet-uncommitted data. In PostgreSQL, it is not possible to lower the isolation level to below this level so that dirty reads are allowed.

Repeatable Read

Yet another problem is that of non-repeatable reads. These happen when a transaction reads a row, and then reads it again a bit later but gets a different result – because the row was updated in between by another transaction. The read has become non-repeatable, as shown in this example:

To fix this problem, set the isolation level of the transaction to “repeatable read”. PostgreSQL will then ensure that the second (or any) read will also return the same result as the first read. Here is the same scenario at the upgraded isolation level:

Note that the isolation level was specified along with the BEGIN

statement. It

is also possible to specify this at connection level (as a connection

parameter), as a configuration paramter (default_transaction_isolation)

and using the SET TRANSACTION statement.

Serializable

The next isolation level addresses the problem of lost updates. Updates performed in one transaction can be “lost”, or overwritten by another transaction that happens to run concurrently, as shown here:

Here the second transaction’s UPDATE blocks, because PostgreSQL places a lock to prevent another update until the first transaction is finished. However, the first transaction’s change is lost, because the second one “overwrote” the row.

If this sort of behavior is not acceptable, you can upgrade the isolation level to serializable:

At this level, the commit of the second transaction fails. The second transaction’s actions were based on facts that were rendered invalid by the time it was about to commit.

While serialization provides the highest level of safety, it also means that the application has to detect such commit failures and retry the entire transaction.

About pgDash

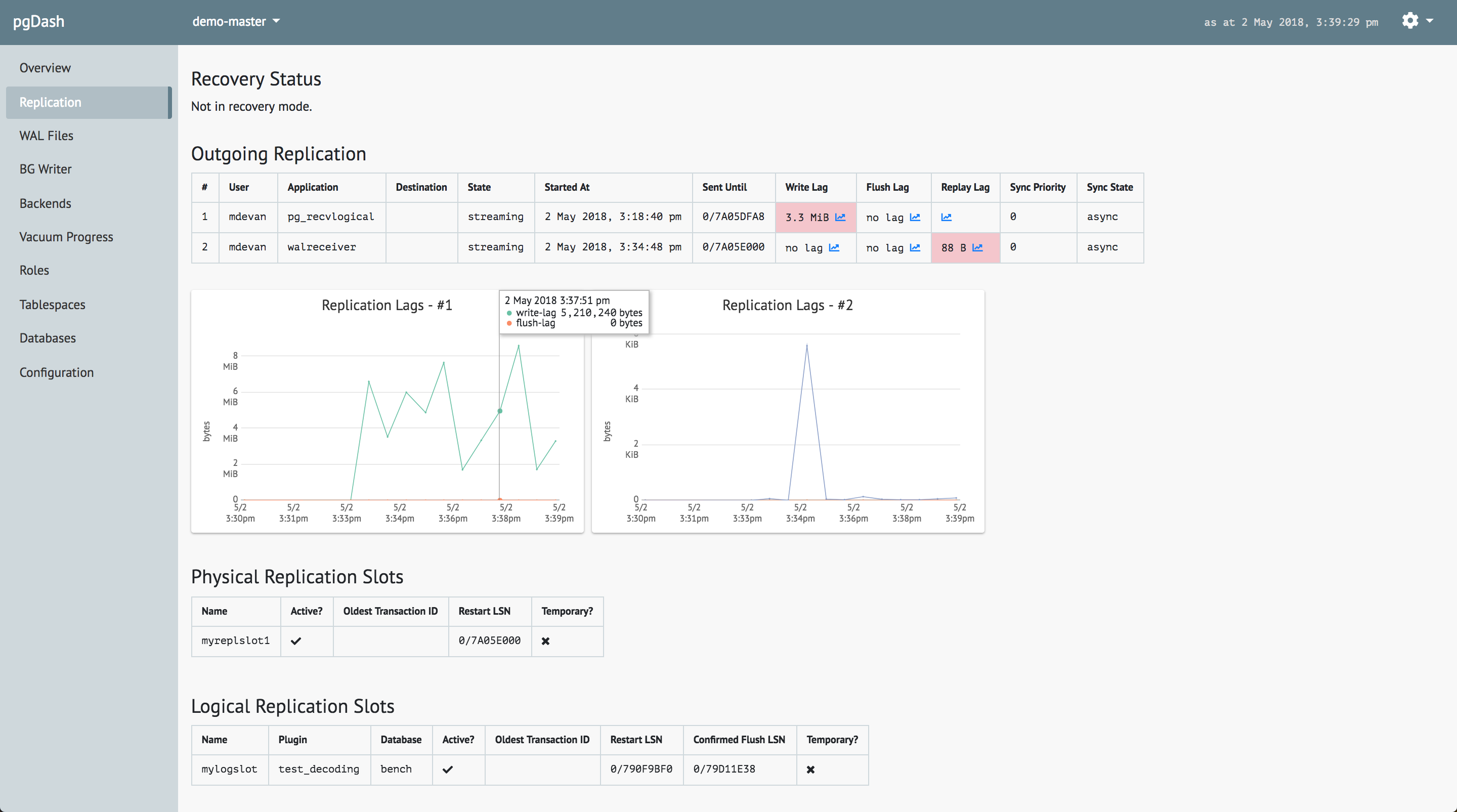

pgDash is a modern, in-depth monitoring solution designed specifically for PostgreSQL deployments. pgDash shows you information and metrics about every aspect of your PostgreSQL database server, collected using the open-source tool pgmetrics.

pgDash provides core reporting and visualization functionality, including collecting and displaying PostgreSQL information and providing time-series graphs, detailed reports, alerting, teams and more.